The Biggest AI Deal of 2026 Wasn't About AI

On March 17, 2026, IBM closed an $11 billion acquisition. Not of an AI model company. Not of a chatbot startup. Not of a GPU manufacturer. They bought Confluent, a data streaming platform used by over 6,500 enterprises, including 40% of the Fortune 500.

Eleven billion dollars for a company that moves data from point A to point B in real time.

If that sounds boring compared to the AI headlines you read every day, you are looking at exactly the right signal.

Why Data Infrastructure for AI Matters More Than the Model

Here is the uncomfortable truth about enterprise AI in 2026. Only 14% of enterprises report data architectures fully prepared for AI workloads. Nearly 88% of AI proof-of-concept initiatives fail to reach production. And the primary constraint is not model capability. It is data readiness.

These are not fringe stats from an obscure survey. They come from Coalesce, HyperFRAME Research, and Cloudera. The pattern is consistent across the analyst reports I have read this quarter. The models work. The data does not.

I talk to data leaders every week. The story is always the same. They evaluated three AI vendors. They ran a proof of concept. It worked beautifully on a curated demo dataset. Then they plugged it into their real production data and everything fell apart. Duplicate records. Conflicting definitions. Stale information. Missing fields. The AI did not fail. The data foundation did.

IBM understood this before most of the market caught up. Their press release did not mention large language models or foundation models or generative AI breakthroughs. It said: "Making real-time data the engine of enterprise AI and agents."

Read that again. Real-time data. The engine. Not the model. Not the parameters. Not the context window. The data.

I Have Seen This Pattern Before

In 2018, every company was hiring data scientists. I co-founded DataBright that year because I saw what came next. The data scientists arrived and found nothing to work with. No clean pipelines. No governed datasets. No single source of truth. They spent 80% of their time cleaning data and 20% doing actual analysis.

By 2022, the same thing happened with dashboards. Companies bought Looker, Tableau, Power BI. They hired analysts. Then they discovered that different teams defined "revenue" differently. The dashboards showed conflicting numbers. Nobody trusted them. I built a Looker practice at DataBright precisely because the semantic layer was the missing piece nobody wanted to talk about.

Now it is 2026, and the pattern is repeating with AI agents. Companies deploy autonomous systems that need to make decisions in real time. The agents are brilliant. The data flowing into them is stale, ungoverned, and contradictory.

Three cycles. Same lesson. The intelligence layer is only as good as the data foundation underneath it.

What makes 2026 different is the cost of getting it wrong. A bad dashboard shows the wrong number. A bad AI agent executes the wrong decision. Autonomously. At scale. In production. The failure mode has shifted from "misleading report" to "automated business damage."

The Intelligence Allocation Stack Explains the $11 Billion

I use a framework I call the Intelligence Allocation Stack. It has four layers.

Layer 1: Data Foundation. Data governance, data quality, ingestion pipelines, warehousing, single source of truth. This is where Confluent lives. This is where real-time data streaming happens. This is where IBM just parked $11 billion.

Layer 2: Semantic Layer. Business logic translated for machines. Metric definitions. Governed vocabulary. Tools like LookML, dbt Semantic Layer, and Omni operate here.

Layer 3: Orchestration Layer. Data pipelines, CRM syncs, reverse ETL, workflow automation, API integrations. The connective tissue between your data and your applications.

Layer 4: AI Layer. AI agents, conversational AI, autonomous systems, predictive models. This is where everyone wants to be. This is where the venture capital goes. This is where the LinkedIn hype lives.

The problem with most enterprise AI strategies in 2026 is simple. They start at Layer 4 and work backward. They deploy agents before they have governed data. They build chatbots before they have a semantic layer. They automate workflows before their pipelines are reliable.

IBM did the opposite. They invested $11 billion at Layer 1. They bought the foundation, not the ceiling.

What Confluent Actually Does (And Why It Costs $11 Billion)

Confluent built its business on Apache Kafka, the open-source event streaming platform that most large enterprises already depend on. But Confluent turned Kafka into something more: a managed, governed, real-time data platform that streams live operational events across an entire organization.

In the context of AI agents, this matters enormously. An AI agent making decisions about inventory, pricing, customer support, or fraud detection needs data that is seconds old, not hours old. Batch processing, the backbone of traditional data warehousing, gives you yesterday's answers to today's questions.

Confluent gives AI agents a live feed of what is happening right now, with lineage, policy enforcement, and data quality controls built in. IBM's press release specifically called this out: "Every AI model, agent, and automated workflow runs on continuously updated enterprise data."

That sentence describes data infrastructure for AI done right. Real-time. Governed. Continuous. And it is not about the model at all.

Think about what this means practically. A customer service AI agent handling refund requests needs to know the customer's order status right now, not as of last night's batch load. A pricing AI agent needs to see competitor moves and inventory levels as they happen, not from a warehouse that refreshes every six hours. A fraud detection agent that runs on stale data is worse than no agent at all, because it creates a false sense of security.
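To make the staleness point concrete, here is a minimal sketch of a freshness guard that sits in front of an agent. The use-case names and the age limits are my own illustrative assumptions, not anything from IBM or Confluent. The idea is only that an agent should refuse to act autonomously when the newest data it sees is older than the decision can tolerate.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness budgets per agent. The numbers are illustrative
# assumptions, not vendor guidance; tune them to your own risk tolerance.
MAX_AGE = {
    "refund_agent": timedelta(seconds=30),
    "pricing_agent": timedelta(minutes=1),
    "fraud_agent": timedelta(seconds=5),
}


def is_fresh(use_case, event_time, now=None):
    """Return True if the event is within the freshness budget for this agent."""
    now = now or datetime.now(timezone.utc)
    return now - event_time <= MAX_AGE[use_case]


def decide(use_case, event_time, now=None):
    """Let the agent act only on fresh data; otherwise hand off to a human."""
    return "act" if is_fresh(use_case, event_time, now) else "escalate"


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    # An event from two seconds ago, as a stream would deliver it.
    print(decide("fraud_agent", now - timedelta(seconds=2), now))
    # An event from a warehouse that refreshed six hours ago.
    print(decide("fraud_agent", now - timedelta(hours=6), now))
```

With a streaming source, the event timestamp is usually seconds old and the agent acts. With a six-hour batch refresh, the same guard escalates almost every time, which is the honest outcome.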

This is why real-time data infrastructure is not optional for the agentic AI era. It is the prerequisite. And IBM just acquired the company that does it better than anyone else.

The Numbers Tell the Story

Let me put this deal in perspective with some data points I track closely.

88% of companies say they use AI, but only 39% see measurable impact. That gap is not a model problem. It is a data infrastructure problem.

Gartner projects that 60% of AI projects will be abandoned by 2028 because the underlying data is not AI-ready. Abandoned. Not pivoted. Not scaled down. Abandoned.

Only 15% of organizations have mature data governance, according to DATAVERSITY. And 62% report incomplete data while 58% cite capture inconsistencies.

Meanwhile, companies with mature data governance see 24% higher revenue from AI initiatives, per IDC research.

The math is clear. For every dollar spent on AI, six should go to data architecture. IBM seems to agree. Their $11 billion check is the most expensive validation of this thesis I have ever seen.

Anthropic's labor market research makes this even more urgent. Their study found 94% theoretical AI coverage in business and finance roles, but only 33% observed adoption. That gap does not exist because the models lack capability. It exists because the enterprise data infrastructure cannot support the deployment. The bottleneck has moved from the AI layer to the data foundation layer, and it will stay there until companies redirect their investment accordingly.

What This Means for Your Data Strategy

If you are a data leader reading this, the IBM-Confluent deal should change three things about how you prioritize your roadmap.

First, stop treating real-time data as a nice-to-have. If your AI agents are running on batch-processed data that is hours or days old, you do not have an AI strategy. You have an expensive experiment. Real-time data streaming is not a feature. It is the foundation for any autonomous system that needs to act, not just analyze.

Second, invest in data governance before you invest in AI tooling. IBM did not acquire Confluent just for the streaming capability. They acquired it for governed, real-time data products. Lineage. Policy enforcement. Quality controls. If your data has no governance layer, your AI agents will make confidently wrong decisions at machine speed.

Third, audit your intelligence allocation. Map your current spending across the four layers. If more than 40% of your data and AI budget sits at Layer 4 while Layers 1 through 3 are underfunded, you are building on sand. I have seen companies spend millions on AI agent platforms while their underlying data pipelines break every other week. The companies that will win the AI era are the ones that have the courage to invest heavily in infrastructure that nobody sees and nobody puts on a keynote slide.
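The audit in the third point is simple arithmetic, and a rough sketch looks like this. The budget figures and layer labels below are made-up placeholders. The only number taken from the text above is the 40% threshold for Layer 4.

```python
# Hypothetical annual spend in USD per layer of the Intelligence Allocation
# Stack. These figures are placeholders; substitute your own budget lines.
budget = {
    "Layer 1: Data Foundation": 1_000_000,
    "Layer 2: Semantic Layer": 500_000,
    "Layer 3: Orchestration Layer": 500_000,
    "Layer 4: AI Layer": 3_000_000,
}


def allocation_shares(spend):
    """Return each layer's share of the total spend as a fraction of 1."""
    total = sum(spend.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    return {layer: amount / total for layer, amount in spend.items()}


def is_top_heavy(spend, ai_layer="Layer 4: AI Layer", threshold=0.40):
    """Flag a budget where the AI layer takes more than the threshold share."""
    return allocation_shares(spend)[ai_layer] > threshold


if __name__ == "__main__":
    for layer, share in allocation_shares(budget).items():
        print(f"{layer}: {share:.0%}")
    print("Top-heavy:", is_top_heavy(budget))
```

In this placeholder budget, Layer 4 takes 60% of the total, so the check flags it. The hard part in practice is not the math but agreeing on which line items belong to which layer.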

This is not about choosing between AI and data infrastructure. It is about sequencing. Build Layer 1 first. Get your data governed, real-time, and reliable. Then your AI agents will have something worth reasoning over. Then your semantic layer will have trustworthy definitions. Then your orchestration layer will move clean, timely data to the right place. Start at one, not at four.

The Boring Stuff Wins

I have been saying this since 2018, when I built DataBright on the conviction that the unsexy work of data engineering and governance would become the most valuable capability in tech. We scaled that business and it was acquired in 2023.

The thesis has not changed. The stakes have.

In 2018, bad data meant bad dashboards. In 2022, bad data meant conflicting metrics. In 2026, bad data means autonomous agents making wrong decisions at scale, in production, affecting real customers and real revenue.

IBM just told the market what I have been telling clients for years. The data infrastructure layer is not a cost center. It is the competitive advantage. They did not say it in a blog post or a conference keynote. They said it with $11 billion.

Systems beat individuals at scale. And the system starts at Layer 1.

More from Unwind Data

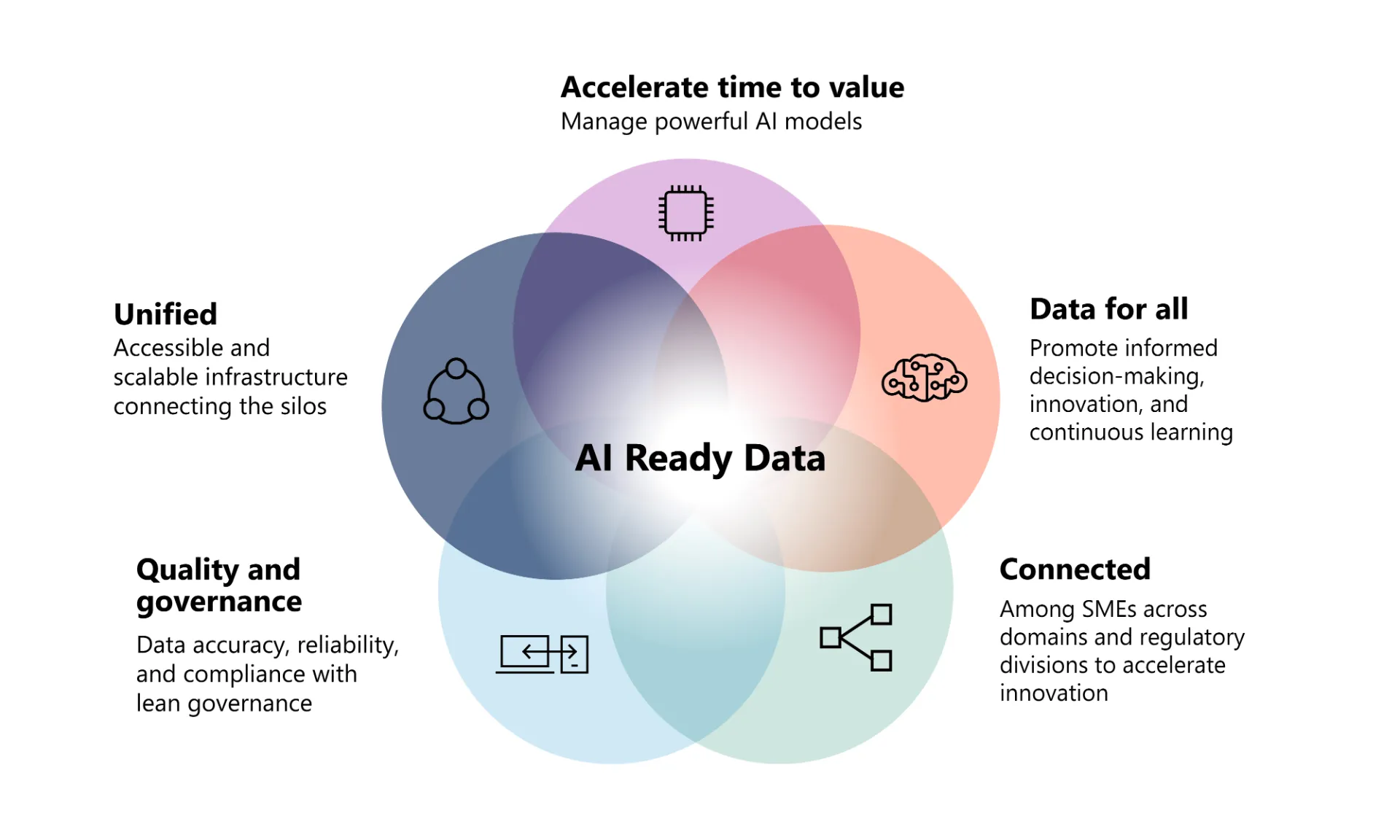

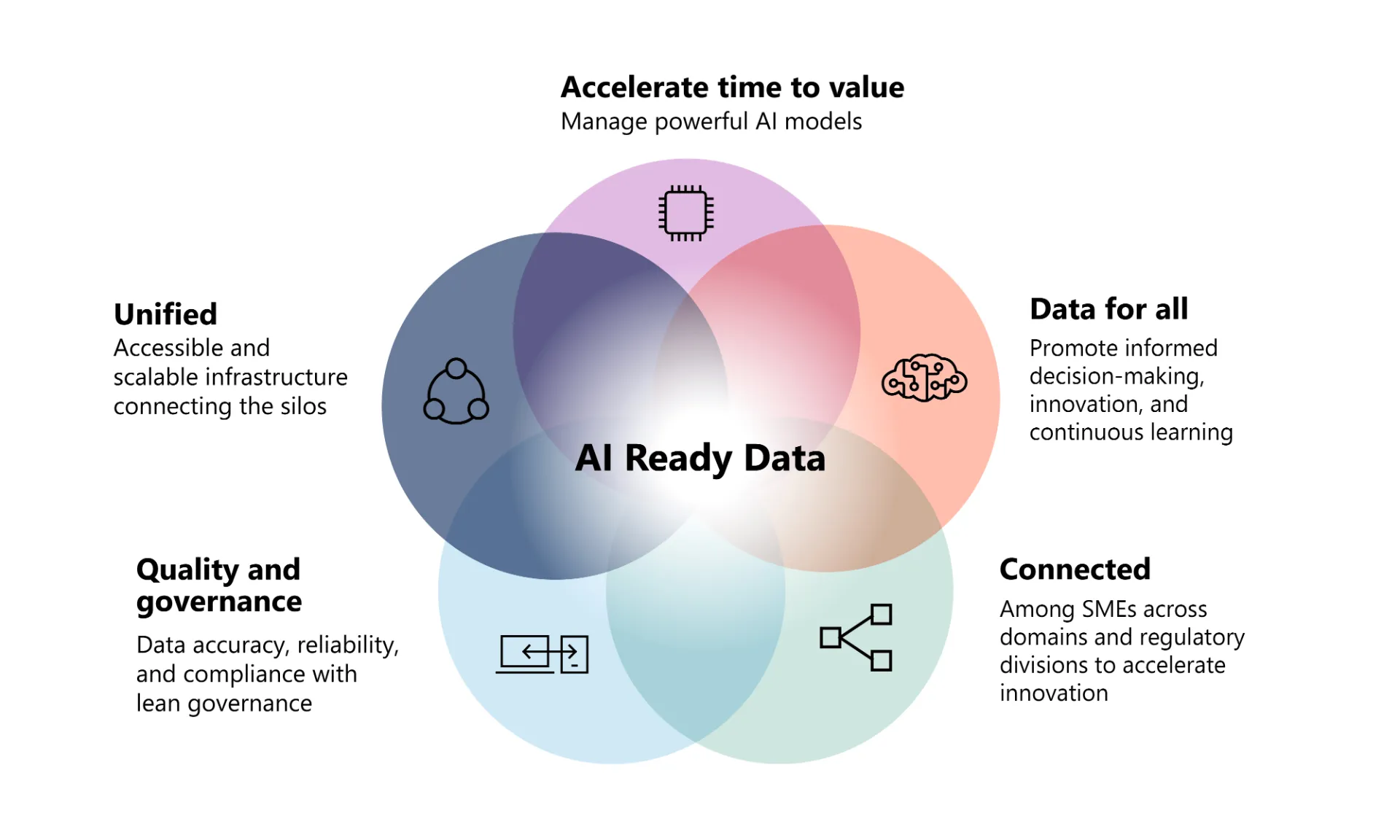

AI-Ready Data: Frequently Asked Questions

Answers to the most common questions about AI-ready data, data governance for AI, the semantic layer, and what it takes to build a data foundation that makes AI reliable.

AI-Ready Data Foundation: What It Takes Before You Deploy

An AI-ready data foundation is a governed, consistently defined data infrastructure that lets AI models and agents operate reliably at scale.

Ready to unlock your data potential?

Let's talk about how we can transform your data into actionable insights.

Get in touch