Data Governance for AI: The Foundation Your Models Need

Data governance for AI ensures your models, agents, and automations run on trusted, consistent data. Learn what it takes to build a governance layer that makes AI reliable.

88% of companies are using AI. Only 39% see measurable impact. The gap between adoption and results is not a model problem. It is a governance problem.

Data governance for AI is not a compliance checkbox. It is the architectural discipline that determines whether your AI models, agents, and automations run on trusted data or confident guesses. Companies that skip this step build AI that hallucinates with authority, because nobody governed what went in.

What is data governance for AI

Data governance for AI is the practice of managing data accuracy, consistency, lineage, and access so that AI systems can operate reliably. It covers how data enters your organization, how it gets defined, who owns it, and how changes are tracked.

Traditional data governance focused on compliance and reporting. Data governance for AI goes further. AI models consume data at scale, across departments, without human review of each input. If your revenue metric means one thing in finance and another in marketing, a dashboard shows two numbers, and someone eventually notices the conflict. An AI agent picks one and acts on it, so the error compounds faster.

In practical terms, data governance for AI means every dataset your models touch has a defined owner, a documented schema, a quality threshold, and a clear lineage from source to consumption. If you are new to this space, the fundamentals of data governance are a useful starting point before diving into AI-specific requirements.
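As a rough illustration, those four requirements can be recorded per dataset and checked automatically. The sketch below is a minimal Python example under assumed names: `DatasetContract`, its fields, and `missing_governance` are hypothetical, not part of any specific catalog tool.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical per-dataset contract; field names are illustrative, not a standard.
@dataclass
class DatasetContract:
    name: str
    owner: Optional[str] = None                             # accountable person or team
    schema: dict[str, str] = field(default_factory=dict)    # column -> documented type
    min_quality_score: Optional[float] = None               # e.g. share of rows that must pass checks
    upstream: list[str] = field(default_factory=list)       # lineage: upstream datasets or source systems

def missing_governance(contract: DatasetContract) -> list[str]:
    """Return the governance elements this dataset is still missing."""
    gaps = []
    if not contract.owner:
        gaps.append("owner")
    if not contract.schema:
        gaps.append("schema")
    if contract.min_quality_score is None:
        gaps.append("quality threshold")
    if not contract.upstream:
        gaps.append("lineage")
    return gaps

orders = DatasetContract(
    name="analytics.orders",
    owner="data-platform",
    schema={"order_id": "string", "amount_eur": "decimal"},
    upstream=["raw.shop_orders"],
)
print(missing_governance(orders))  # ['quality threshold']
```

A model or agent would only be pointed at datasets for which this list is empty.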

Why data governance matters for AI

Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. Not because the models failed. Because the data fed into them was inconsistent, incomplete, or ungoverned.

Here is what ungoverned data does to AI systems:

- Hallucination at scale. An AI agent querying ungoverned data will return answers that look correct but are based on conflicting definitions. There is no error message. The output just quietly diverges from reality.

- Broken trust. One wrong AI-generated report to the board and the entire initiative loses credibility. Rebuilding trust takes quarters. Losing it takes one meeting.

- Wasted compute. Training models on dirty data does not produce insights. It produces expensive noise. According to IDC, companies with mature data governance see 24% higher revenue from AI initiatives.

- Compliance exposure. AI systems that process personal data without governance create GDPR, CCPA, and EU AI Act liability. The regulatory surface area grows with every ungoverned dataset.

The pattern repeats across industries. In 2018, companies hired data scientists who spent 80% of their time cleaning data. In 2022, dashboards showed conflicting numbers because nobody governed the metric definitions. In 2026, AI agents are making decisions on data that only one engineer understands. Different era, same root cause.

How data governance for AI works

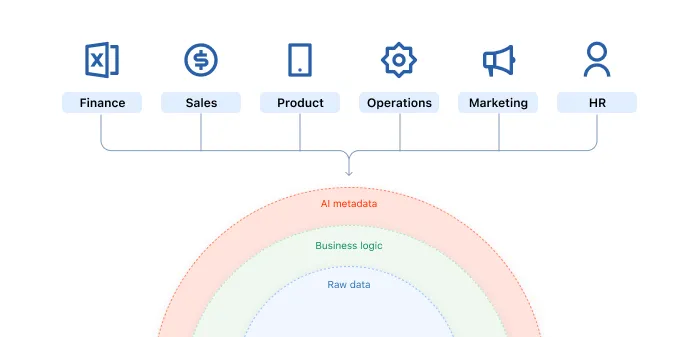

Effective data governance for AI operates across four layers. Skip one and the layers above it become unreliable.

Layer 1: Data foundation

This is where data enters, gets stored, and becomes governable. Ingestion pipelines, warehousing, data quality checks, and schema validation. The test is simple: can three different people in your company run the same query and get the same number? If not, this layer is broken.
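A minimal sketch of the checks this layer runs before data is served, assuming rows arrive as Python dicts and the expected schema maps column names to Python types. `validate_batch` and `EXPECTED_SCHEMA` are illustrative names; in a real stack these checks usually live in a tool such as dbt tests rather than hand-written code.

```python
# Assumed contract for one table: column name -> expected Python type.
EXPECTED_SCHEMA = {"order_id": str, "customer_id": str, "amount": float}

def validate_batch(rows: list[dict], schema: dict[str, type], key: str) -> list[str]:
    """Return readable failures for a batch; an empty list means it passes."""
    failures = []
    seen_keys = set()
    for i, row in enumerate(rows):
        # Schema validation: every expected column must be present...
        missing = schema.keys() - row.keys()
        if missing:
            failures.append(f"row {i}: missing columns {sorted(missing)}")
        # ...and non-null values must have the expected type.
        for col, expected_type in schema.items():
            value = row.get(col)
            if value is not None and not isinstance(value, expected_type):
                failures.append(
                    f"row {i}: {col} is {type(value).__name__}, expected {expected_type.__name__}"
                )
        # Quality check: the key must be present and unique.
        k = row.get(key)
        if k is None:
            failures.append(f"row {i}: null {key}")
        elif k in seen_keys:
            failures.append(f"row {i}: duplicate {key} {k!r}")
        else:
            seen_keys.add(k)
    return failures

batch = [
    {"order_id": "A1", "customer_id": "C1", "amount": 120.0},
    {"order_id": "A1", "customer_id": "C2", "amount": 80.0},  # duplicate key
    {"order_id": "A3", "customer_id": "C3", "amount": "95"},  # amount arrived as text
]
for failure in validate_batch(batch, EXPECTED_SCHEMA, key="order_id"):
    print(failure)
# row 1: duplicate order_id 'A1'
# row 2: amount is str, expected float
```

Blocking batches like this one before they land is what gives three people running the same query a realistic chance of getting the same number.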

Layer 2: Semantic layer

Business logic translated for machines. Revenue, active customer, churn rate. Every metric gets one governed definition that every downstream tool and every AI agent relies on. Without this, your AI does not understand your business. It understands your data. Those are not the same thing.

Learn more about how a semantic layer enables AI readiness and why it is the most underinvested layer in most modern data stacks.
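To make "one governed definition" concrete, the sketch below models a tiny metric registry in Python. It is a stand-in for what semantic layer tools such as dbt's semantic layer or Looker's LookML express declaratively, not their API; `Metric`, `register`, `query`, and the revenue rule itself are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    owner: str
    compute: Callable[[list[dict]], float]

# Hypothetical registry: exactly one governed definition per metric name.
REGISTRY: dict[str, Metric] = {}

def register(metric: Metric) -> None:
    if metric.name in REGISTRY:
        raise ValueError(f"{metric.name!r} already has a governed definition")
    REGISTRY[metric.name] = metric

def query(metric_name: str, rows: list[dict]) -> float:
    """Every consumer (dashboard, notebook, AI agent) resolves metrics through here."""
    return REGISTRY[metric_name].compute(rows)

# Illustrative rule only: completed orders, net of refunds.
register(Metric(
    name="revenue",
    description="Completed order amount minus refunds",
    owner="finance",
    compute=lambda rows: sum(r["amount"] - r["refunded"] for r in rows if r["status"] == "completed"),
))

orders = [
    {"amount": 100.0, "refunded": 0.0, "status": "completed"},
    {"amount": 50.0, "refunded": 20.0, "status": "completed"},
    {"amount": 70.0, "refunded": 0.0, "status": "cancelled"},
]
print(query("revenue", orders))  # 130.0
```

A second team that wants "revenue" to mean something else gets an error at registration time instead of silently shipping a different number to a dashboard or an agent.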

Layer 3: Orchestration

Data pipelines, CRM syncs, reverse ETL, API integrations. This is the nervous system that routes governed data to the right place at the right time. Governance here means knowing what data moves where, on what schedule, and what breaks when a source changes.
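Knowing what breaks when a source changes is a graph question. Assuming lineage is recorded as downstream edges (every node lists what reads from it), a breadth-first walk returns everything affected. The node names and `impacted_by` are illustrative.

```python
from collections import deque

# Hypothetical lineage: each node lists the nodes that read from it (downstream edges).
LINEAGE = {
    "stripe.charges": ["staging.payments"],
    "salesforce.opportunities": ["staging.opportunities"],
    "staging.payments": ["marts.revenue"],
    "staging.opportunities": ["marts.pipeline"],
    "marts.revenue": ["looker.revenue_dashboard", "agent.finance_assistant", "reverse_etl.crm_arr"],
    "marts.pipeline": ["looker.pipeline_dashboard"],
}

def impacted_by(source: str, lineage: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk returning every node downstream of `source`, nearest first."""
    seen: set[str] = set()
    impacted: list[str] = []
    queue = deque(lineage.get(source, []))
    while queue:
        node = queue.popleft()
        if node in seen:  # a node can be reachable along several paths
            continue
        seen.add(node)
        impacted.append(node)
        queue.extend(lineage.get(node, []))
    return impacted

print(impacted_by("stripe.charges", LINEAGE))
# ['staging.payments', 'marts.revenue', 'looker.revenue_dashboard',
#  'agent.finance_assistant', 'reverse_etl.crm_arr']
```

The agent and the reverse ETL sync show up next to the dashboard: a change to one payment source reaches an AI consumer and a CRM field, which is the blast radius this layer needs to know before the change ships.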

Layer 4: AI layer

Models, agents, and automations consume everything below. This layer only works if Layers 1 through 3 are governed. An AI agent on an ungoverned stack is a confident liability.
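One way to enforce this at the AI layer is a gate that refuses to hand an agent any metric lacking a governed definition or a passing quality status. This is a hedged sketch: the `METRIC_STATUS` lookup, `UngovernedDataError`, and `resolve_for_agent` are hypothetical, and a real system would read that status from its catalog or semantic layer.

```python
# Hypothetical status per metric, as maintained by the governed layers below.
METRIC_STATUS = {
    "revenue": {"owner": "finance", "quality_checks_passed": True},
    "churn_rate": {"owner": "customer-success", "quality_checks_passed": False},
}

class UngovernedDataError(Exception):
    """Raised instead of letting an agent act on data nobody governs."""

def resolve_for_agent(metric: str) -> str:
    """Allow a metric only if it has a governed definition and passed its latest checks."""
    status = METRIC_STATUS.get(metric)
    if status is None:
        raise UngovernedDataError(f"{metric!r} has no governed definition")
    if not status["quality_checks_passed"]:
        raise UngovernedDataError(f"{metric!r} failed its latest quality checks")
    return f"{metric} (owner: {status['owner']})"

for name in ["revenue", "churn_rate", "active_users"]:
    try:
        print("using", resolve_for_agent(name))
    except UngovernedDataError as err:
        print("refused:", err)
# using revenue (owner: finance)
# refused: 'churn_rate' failed its latest quality checks
# refused: 'active_users' has no governed definition
```

Failing loudly is the point of the design: the risk described earlier is an agent that returns a plausible answer with no error message.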

Common mistakes companies make

Starting at Layer 4

The most common mistake is deploying AI before governing the data it consumes. Executives get excited about agents and chatbots. Nobody gets promoted for fixing a data pipeline. But the pipeline is where trust is built or lost.

Treating governance as a one-time project

Data governance is not a migration. It is a continuous discipline. Data sources change, business definitions evolve, new teams start producing data. Governance that was accurate six months ago may be wrong today.
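Continuous governance can start with something as simple as comparing the documented schema against what a source currently delivers, on every run. The sketch assumes both schemas are available as column-to-type dicts (the observed one could be read from the warehouse's `INFORMATION_SCHEMA.COLUMNS` view); `schema_drift` and the example columns are illustrative.

```python
def schema_drift(documented: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare the documented schema (column -> type) with what the source now delivers."""
    added = sorted(observed.keys() - documented.keys())
    removed = sorted(documented.keys() - observed.keys())
    retyped = sorted(
        col for col in documented.keys() & observed.keys()
        if documented[col] != observed[col]
    )
    return {"added": added, "removed": removed, "retyped": retyped}

documented = {"customer_id": "string", "plan": "string", "mrr": "decimal"}
observed = {"customer_id": "string", "plan_tier": "string", "mrr": "float", "region": "string"}
print(schema_drift(documented, observed))
# {'added': ['plan_tier', 'region'], 'removed': ['plan'], 'retyped': ['mrr']}
```

Any non-empty list is a signal that the documentation, and possibly the downstream definitions, need review before the next model run.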

Relying on tribal knowledge

62% of organizations report incomplete data. 58% cite capture inconsistencies. In most companies, the critical knowledge about data relationships and edge cases lives in one person's head. When that person leaves, the governance leaves with them. AI does not tolerate undocumented assumptions.
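A first step away from tribal knowledge is measuring how much of the data model is actually written down. The sketch assumes a catalog export mapping each table's columns to their descriptions (for example, the `description` fields in a dbt project); `CATALOG`, `undocumented_columns`, and `coverage` are hypothetical.

```python
# Hypothetical catalog export: table -> column -> description ("" if undocumented).
CATALOG = {
    "marts.customers": {
        "customer_id": "Stable ID from the CRM; never reused",
        "is_active": "",
        "segment": "",
    },
    "marts.orders": {
        "order_id": "Unique per checkout",
        "amount": "Gross amount in EUR, before refunds",
    },
}

def undocumented_columns(catalog: dict[str, dict[str, str]]) -> list[str]:
    """List fully qualified columns whose meaning lives only in someone's head."""
    return [
        f"{table}.{col}"
        for table, cols in catalog.items()
        for col, desc in cols.items()
        if not desc.strip()
    ]

def coverage(catalog: dict[str, dict[str, str]]) -> float:
    """Share of columns with a written description (1.0 for an empty catalog)."""
    total = sum(len(cols) for cols in catalog.values())
    documented = total - len(undocumented_columns(catalog))
    return documented / total if total else 1.0

print(undocumented_columns(CATALOG))  # ['marts.customers.is_active', 'marts.customers.segment']
print(f"{coverage(CATALOG):.0%}")     # 60%
```

Columns like `is_active` are typical of the problem: the definition of "active" is exactly the kind of edge case that leaves with the person who knew it.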

Who needs data governance for AI

If you are deploying AI agents, building predictive models, or automating decisions with data, you need data governance for AI. Specifically:

- Companies with 30 to 500 employees scaling beyond the point where one person can hold the entire data model in their head.

- Teams running dbt, Snowflake, BigQuery, or Looker that have the infrastructure but lack the governance layer on top.

- Organizations where AI pilots succeeded but production deployment stalled because data quality issues surfaced at scale.

- Data leaders reporting to the board on AI ROI who need confidence that the numbers their models produce are trustworthy.

Only 15% of organizations have mature data governance. Any AI the other 85% deploy runs on a foundation they cannot fully trust. See how leading organizations are closing this gap in our AI readiness checklist.

How Unwind Data approaches this

At Unwind Data, we build data governance from the bottom up. Layer 1 before Layer 4. Foundation before agents. We have done this across fintech, e-commerce, SaaS, and sustainability, from early-stage startups to scaling platforms handling millions of transactions.

Our approach is provider-agnostic and built on the modern data stack: Snowflake, dbt, Fivetran, Looker, Omni. We implement the semantic layer that gives your AI a single source of truth, the quality checks that catch problems before they reach a model, and the orchestration that keeps governed data flowing to every system that needs it.

For every dollar companies spend on AI, six should go to the data architecture underneath it. We help you allocate that investment correctly.

